Hands-on: installing NFS dynamic provisioning (YAML method) (tested successfully), 2022-08-13


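Environment

- Lab environment

1. Windows 10 with VMware Workstation virtual machines;

2. Kubernetes cluster: three CentOS 7.6 (1810) VMs, one master node and two worker nodes

k8s version: v1.22.2

containerd://1.5.5

- Lab software

Link: https://pan.baidu.com/s/1oOkOtrvWWRtDiWplK5CUPw

Extraction code: 9prr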

0. Install the NFS service (steps omitted)

Reference:

https://onedayxyy.cn/docs/k8s-nfs-install-helm

1. Upload the NFS provisioner bundle to the master node and unpack it

[root@k8s-master ~]#ll -h nfs-external-provisioner.zip

-rw-r--r-- 1 root root 1.7K Jun 28 18:13 nfs-external-provisioner.zip

[root@k8s-master ~]#unzip nfs-external-provisioner.zip # unpack the archive

Archive: nfs-external-provisioner.zip

creating: nfs-external-provisioner/

inflating: nfs-external-provisioner/class.yaml

inflating: nfs-external-provisioner/deployment.yaml

inflating: nfs-external-provisioner/rbac.yaml

[root@k8s-master ~]#cd nfs-external-provisioner/

[root@k8s-master nfs-external-provisioner]#ls # the archive contains these files

class.yaml deployment.yaml rbac.yaml

2. Modify the YAML files

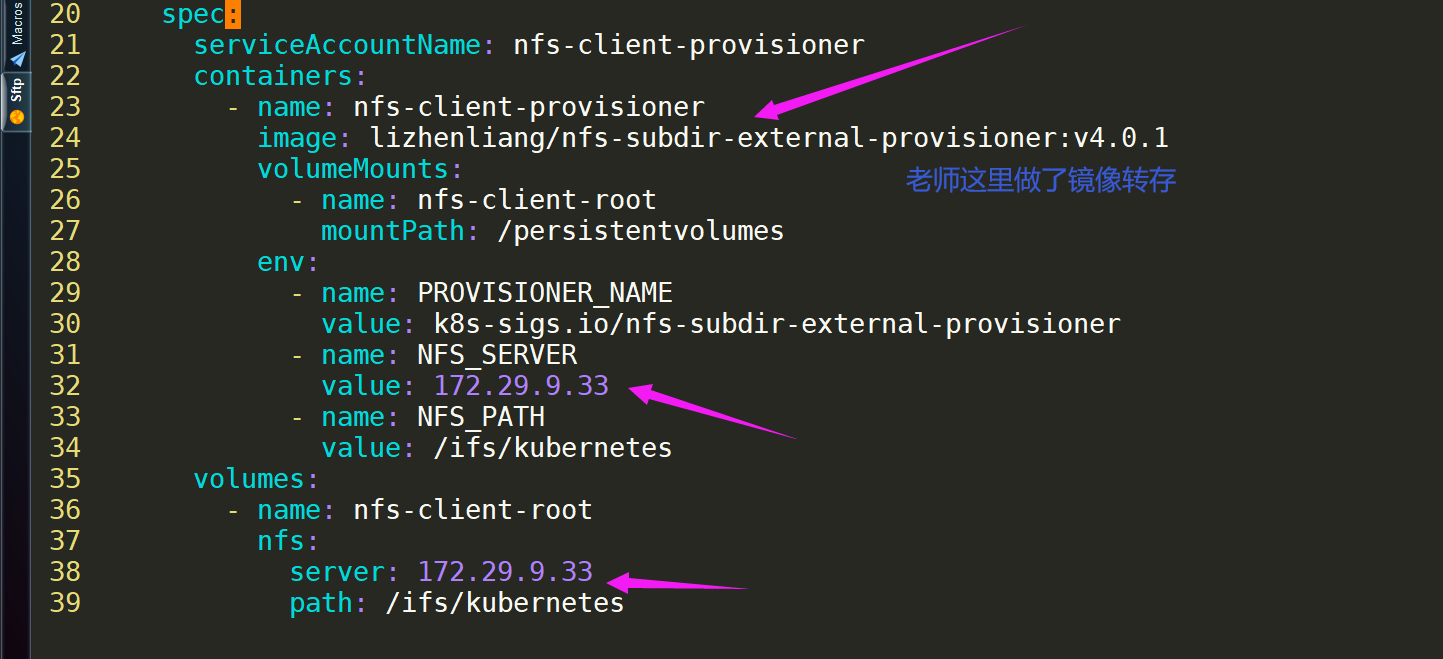

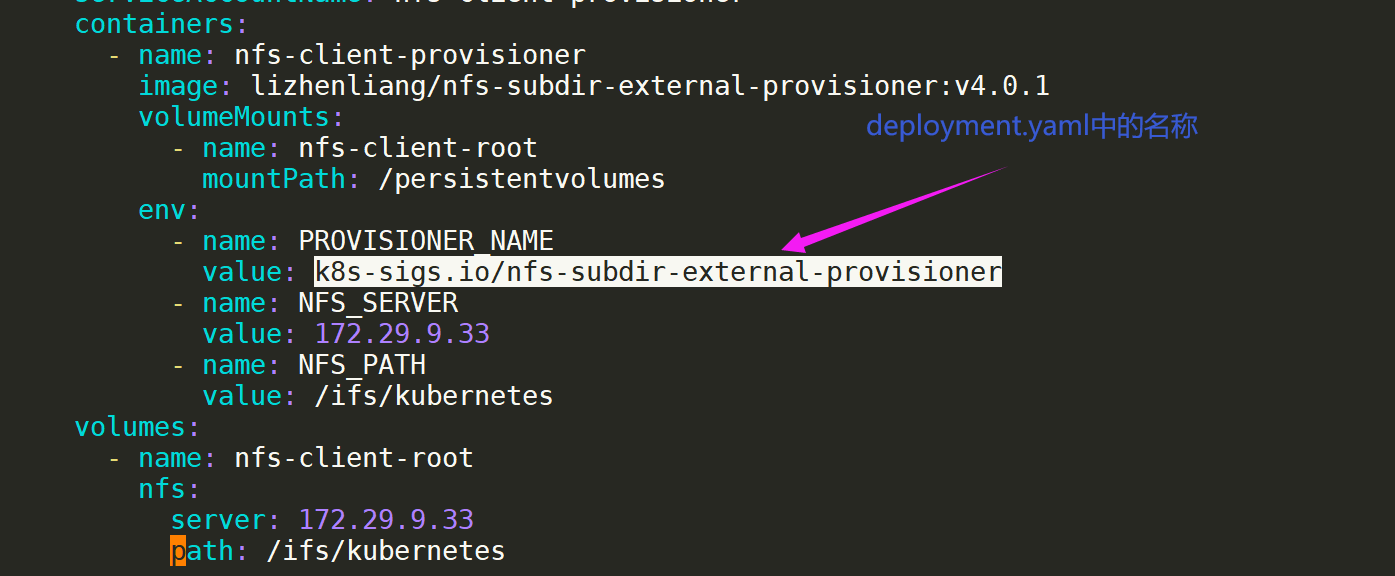

1) Edit deployment.yaml

[root@k8s-master nfs-external-provisioner]#ls

class.yaml deployment.yaml rbac.yaml

[root@k8s-master nfs-external-provisioner]#vim deployment.yaml

lizhenliang/nfs-subdir-external-provisioner:v4.0.1

# Note: in this YAML file you only need to change the NFS server IP and the export directory; nothing else has to be modified.

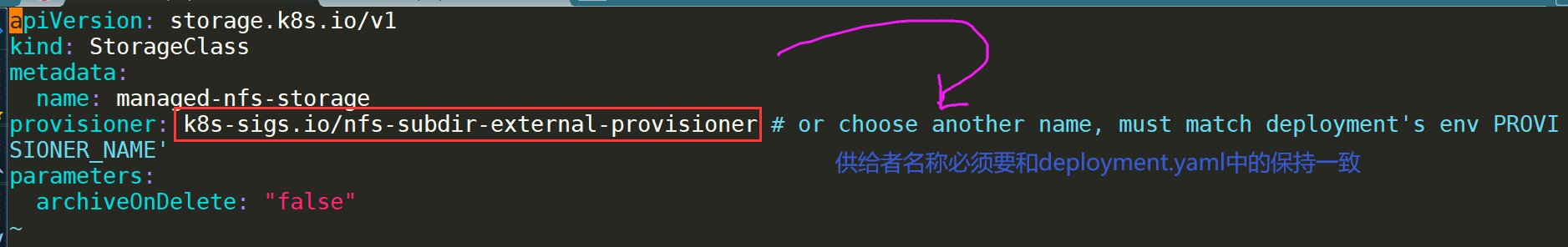
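The two values mentioned in the note usually appear in more than one place. As a minimal sketch, assuming the bundled deployment.yaml follows the upstream nfs-subdir-external-provisioner layout (env entries named `NFS_SERVER` and `NFS_PATH`, plus an `nfs` volume with `server` and `path` keys; this is an assumption, since the file itself is only shown as a screenshot), a small helper with the made-up name `show_nfs_settings` can list every line that should carry the same address and directory:

```shell
#!/bin/sh
# Sketch: print the lines of a provisioner manifest that hold the NFS server
# address and export path, so both occurrences can be compared by eye.
# Assumes the upstream layout (NFS_SERVER / NFS_PATH env vars and an nfs volume).
show_nfs_settings() {
    manifest="${1:-deployment.yaml}"
    # env entries: each matching name line plus the value line right below it
    grep -n -A1 -E 'name: (NFS_SERVER|NFS_PATH)' "$manifest"
    # nfs volume definition: the server and path keys
    grep -n -E '^[[:space:]]*(server|path):' "$manifest"
}
```

Running `show_nfs_settings deployment.yaml` after editing should show the same IP twice and the same directory twice if the file matches that layout.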

2) Review class.yaml (no changes are needed this time)

3. Apply the manifests and check the result

[root@k8s-master nfs-external-provisioner]#ls

class.yaml deployment.yaml rbac.yaml

[root@k8s-master nfs-external-provisioner]#kubectl apply -f .

storageclass.storage.k8s.io/managed-nfs-storage created

deployment.apps/nfs-client-provisioner created

serviceaccount/nfs-client-provisioner created

clusterrole.rbac.authorization.k8s.io/nfs-client-provisioner-runner created

clusterrolebinding.rbac.authorization.k8s.io/run-nfs-client-provisioner created

role.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created

rolebinding.rbac.authorization.k8s.io/leader-locking-nfs-client-provisioner created

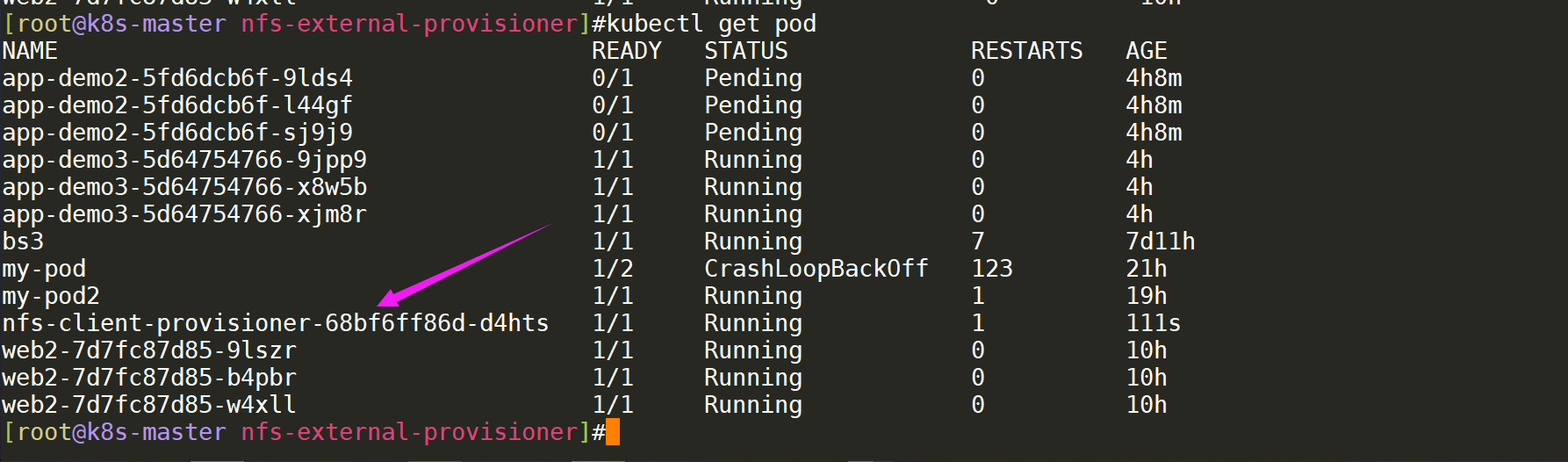

[root@k8s-master nfs-external-provisioner]#kubectl get pod

Check the result:

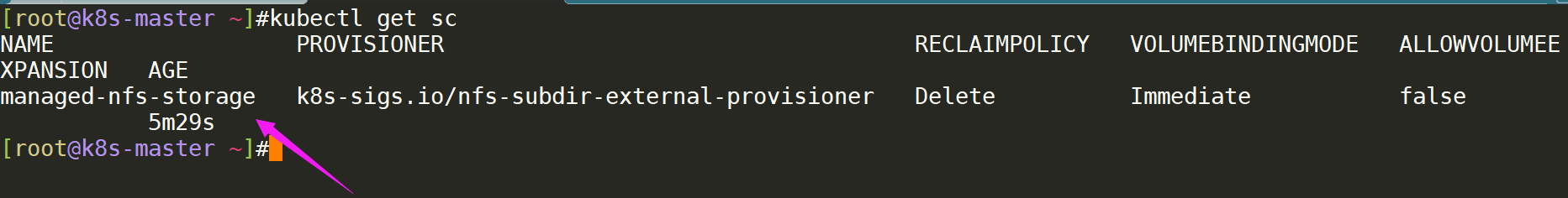

kubectl get sc # list the StorageClasses
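The apply output above names the Deployment `nfs-client-provisioner` and the StorageClass `managed-nfs-storage`. As a rough sketch, assuming `kubectl` points at this cluster and the Deployment lives in the current namespace, the two checks can be combined into one helper (the function name `check_provisioner` is invented for illustration):

```shell
#!/bin/sh
# Sketch: wait for the provisioner Deployment to finish rolling out, then
# print which provisioner the StorageClass refers to.
check_provisioner() {
    deploy="${1:-nfs-client-provisioner}"
    sc="${2:-managed-nfs-storage}"
    # Blocks until the rollout completes, or fails after 120 seconds.
    kubectl rollout status "deployment/$deploy" --timeout=120s || return 1
    # provisioner is a top-level field of a StorageClass object.
    kubectl get storageclass "$sc" -o jsonpath='{.provisioner}'
    echo
}
```

Calling `check_provisioner` with no arguments uses the names from this lab; the printed provisioner should match the `provisioner:` line in class.yaml.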

4. Verify that it works

[root@k8s-master ~]#cp deployment2.yaml deployment-sc.yaml

[root@k8s-master ~]#vim deployment-sc.yaml # define the PVC and the Deployment

apiVersion: apps/v1
kind: Deployment
metadata:
  name: app-demo-sc # changed Deployment name
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web
  strategy: {}
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - image: nginx
        name: nginx
        resources: {}
        volumeMounts:
        - name: data
          mountPath: /usr/share/nginx/html
      volumes:
      - name: data
        persistentVolumeClaim:
          claimName: app-demo-sc # changed PVC name
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-demo-sc # changed PVC name
spec:
  storageClassName: "managed-nfs-storage" # add this line; it is the name shown by kubectl get sc above
  accessModes:
  - ReadWriteMany
  volumeMode: Filesystem
  resources:
    requests:
      storage: 15Gi

- Check how much capacity is currently still available

The 15G is already in use, so there are not enough existing resources to satisfy this claim.

- Apply the manifest directly and check the result

[root@k8s-master ~]#kubectl apply -f deployment-sc.yaml

deployment.apps/app-demo-sc created

persistentvolumeclaim/app-demo-sc created

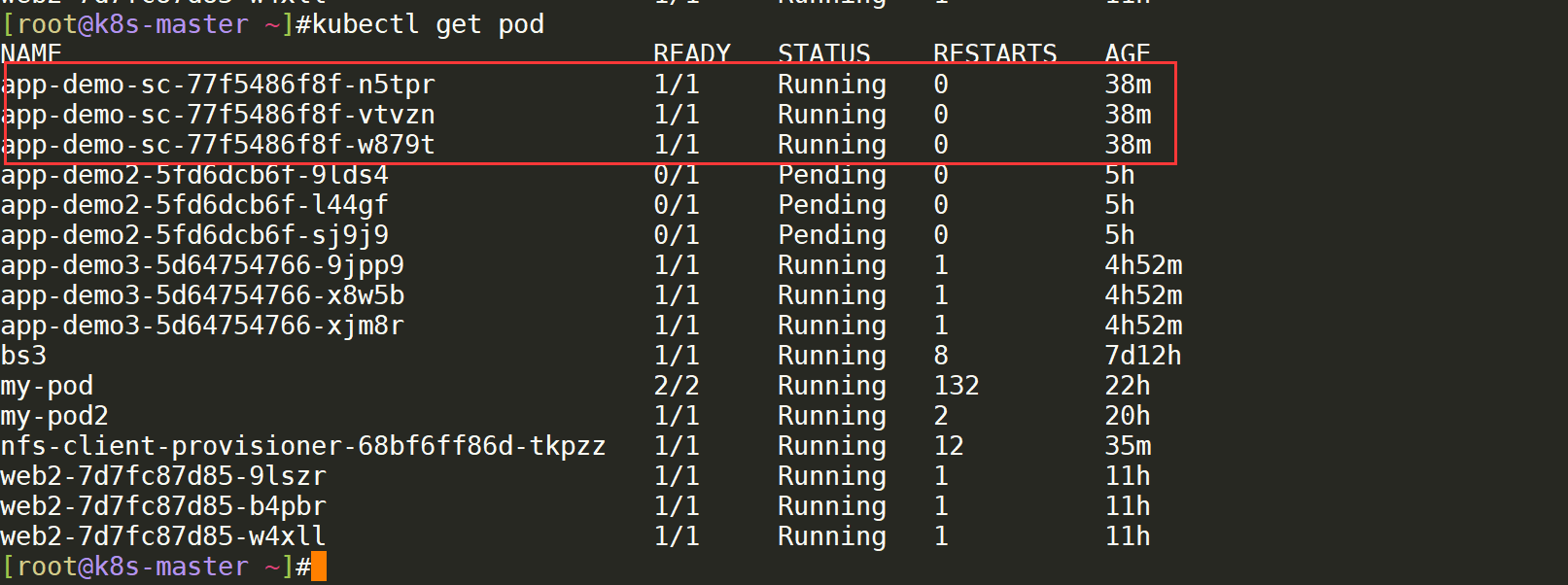

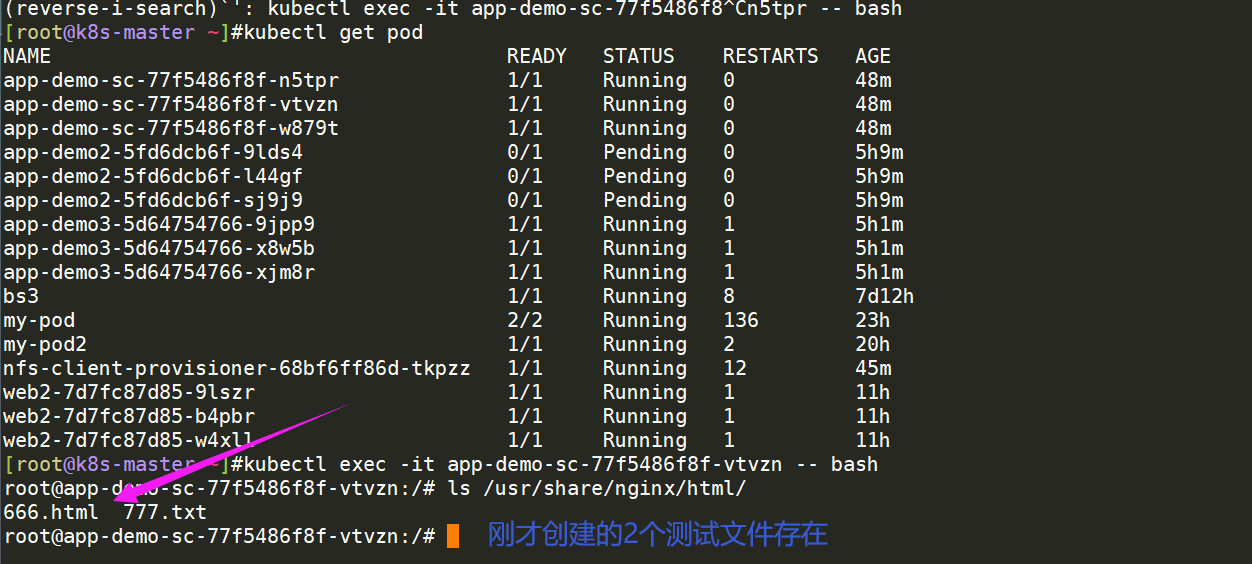

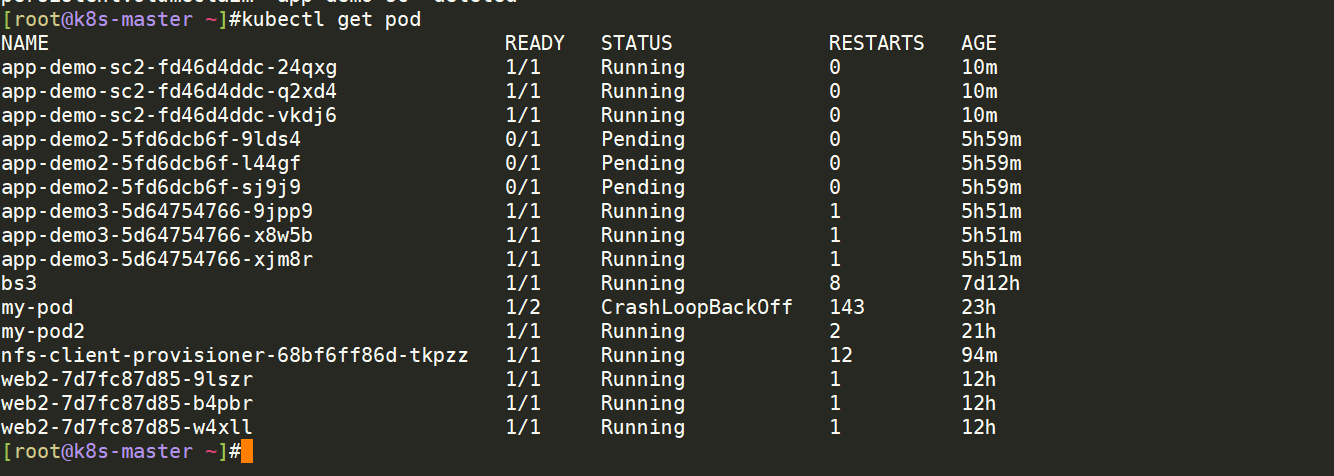

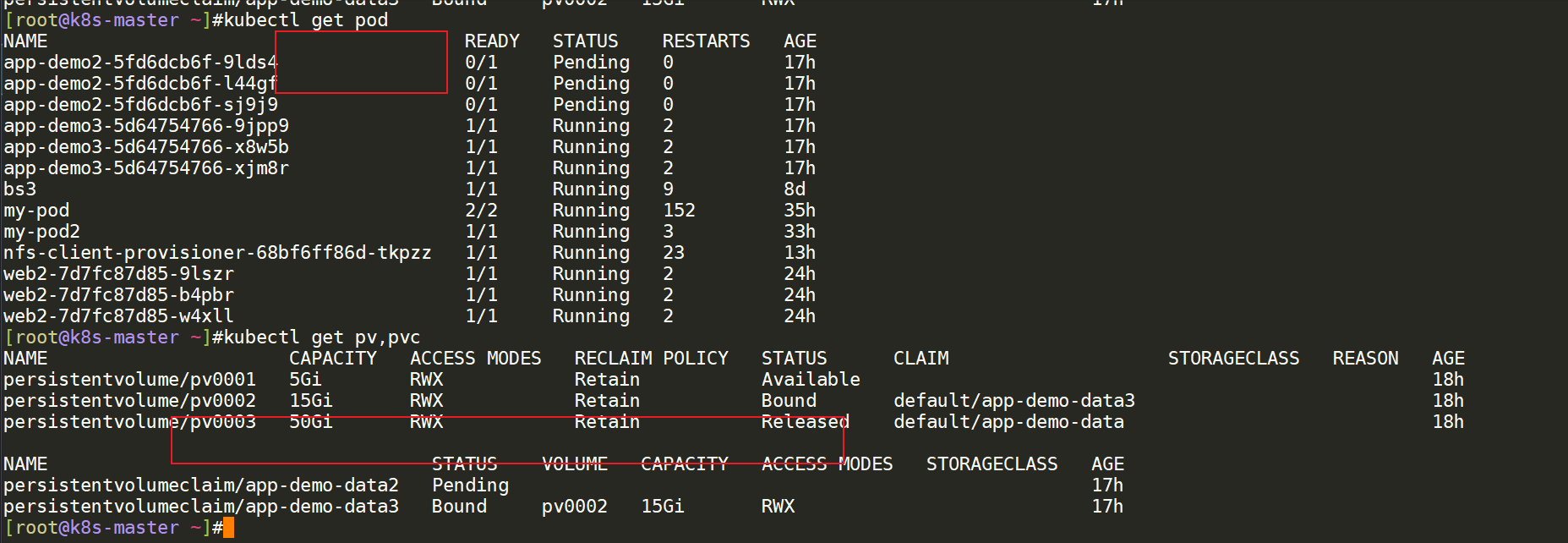

[root@k8s-master ~]#kubectl get pod

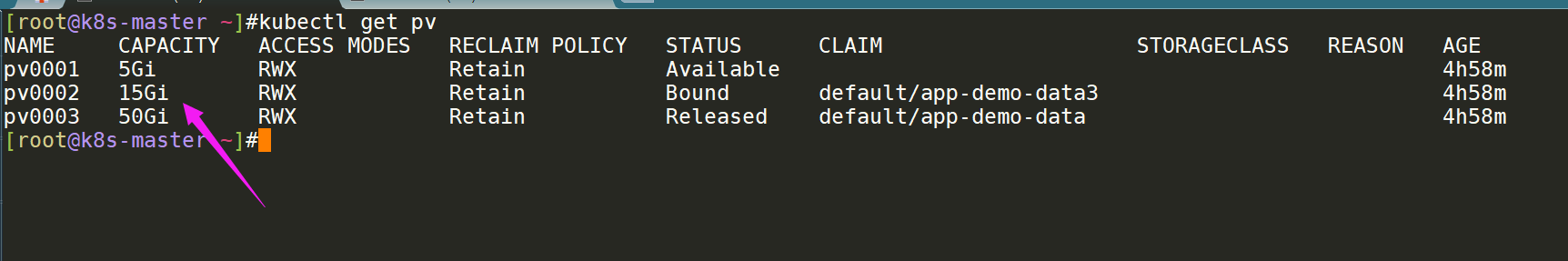

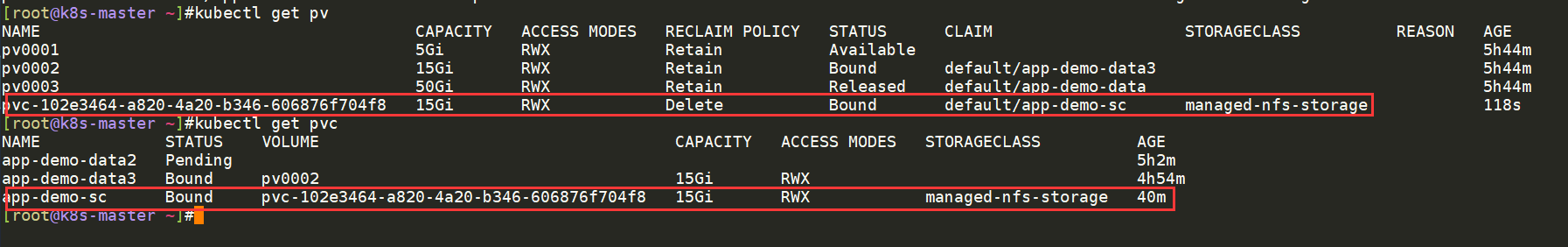

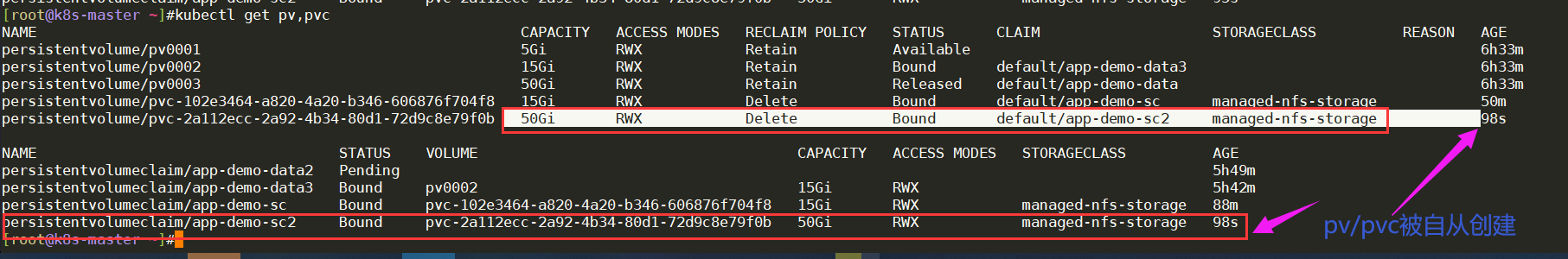

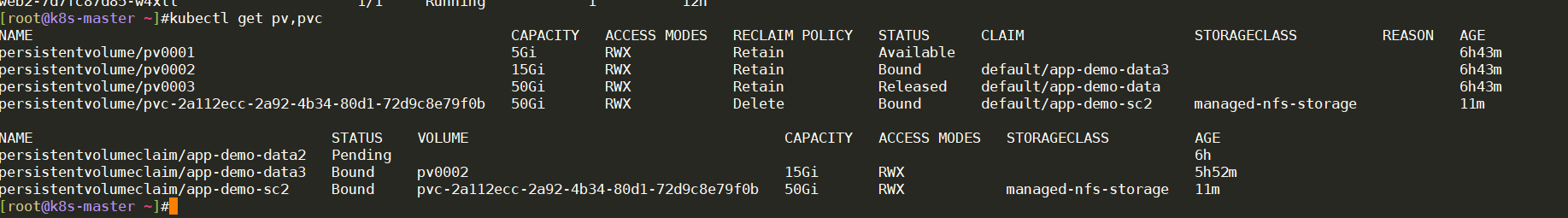

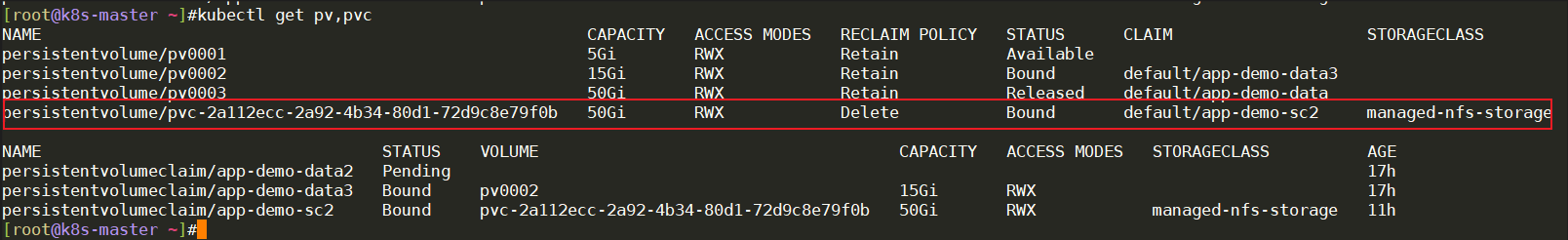

Check the PV and PVC: the PV has been created automatically and bound to the claim.

[root@k8s-master ~]#kubectl get pv

[root@k8s-master ~]#kubectl get pvc

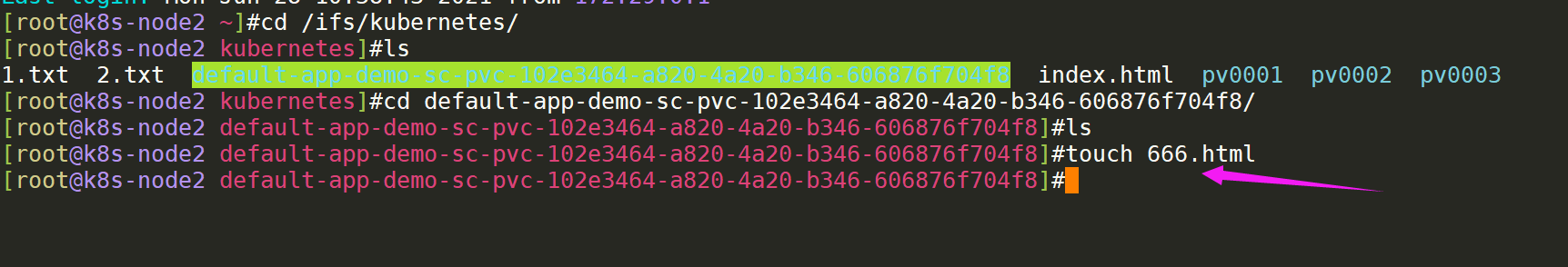

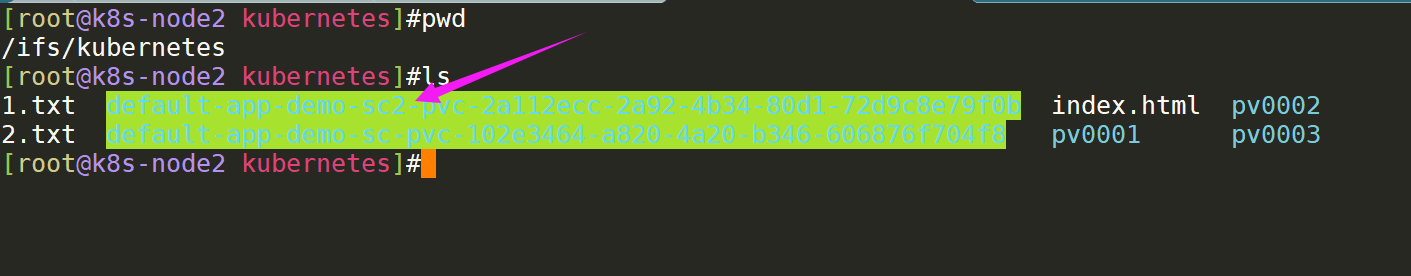

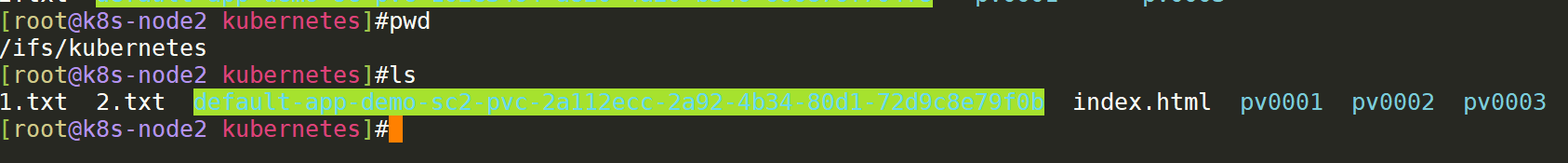

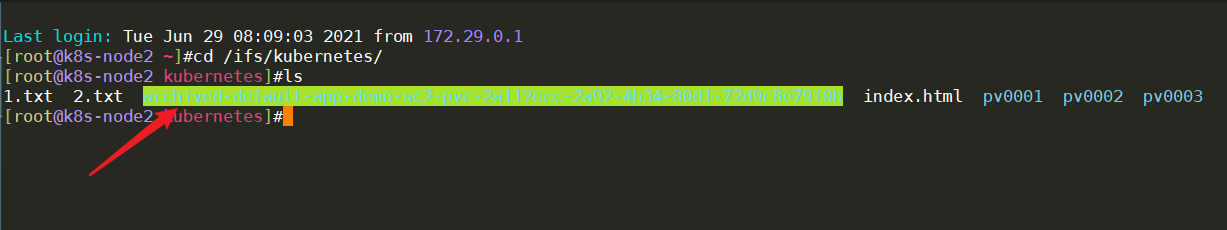
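Dynamic provisioning can take a few seconds, so the claim may briefly show `Pending` before it becomes `Bound`. A minimal sketch, assuming the claim is in the current namespace, that polls the claim and prints the name of the PV it was bound to (the helper name `wait_pvc_bound` and the retry count are illustrative choices, not part of the original lab):

```shell
#!/bin/sh
# Sketch: poll a PVC until its phase is Bound, then print the bound PV name.
# Usage: wait_pvc_bound <pvc-name> [max-checks]
wait_pvc_bound() {
    pvc="$1"
    tries="${2:-30}"
    i=0
    phase=""
    while [ "$i" -lt "$tries" ]; do
        phase="$(kubectl get pvc "$pvc" -o jsonpath='{.status.phase}')"
        if [ "$phase" = "Bound" ]; then
            # spec.volumeName holds the name of the PV backing the claim.
            kubectl get pvc "$pvc" -o jsonpath='{.spec.volumeName}'
            echo
            return 0
        fi
        i=$((i + 1))
        sleep 2
    done
    echo "PVC $pvc not Bound after $tries checks (last phase: $phase)" >&2
    return 1
}
```

For this lab the call would be `wait_pvc_bound app-demo-sc`.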

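Next, look at the export directory on the NFS server:

A subdirectory has been **created automatically under the /ifs/kubernetes export directory**. Create a test file in it, then check whether the file shows up under the corresponding mount path inside the pod: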

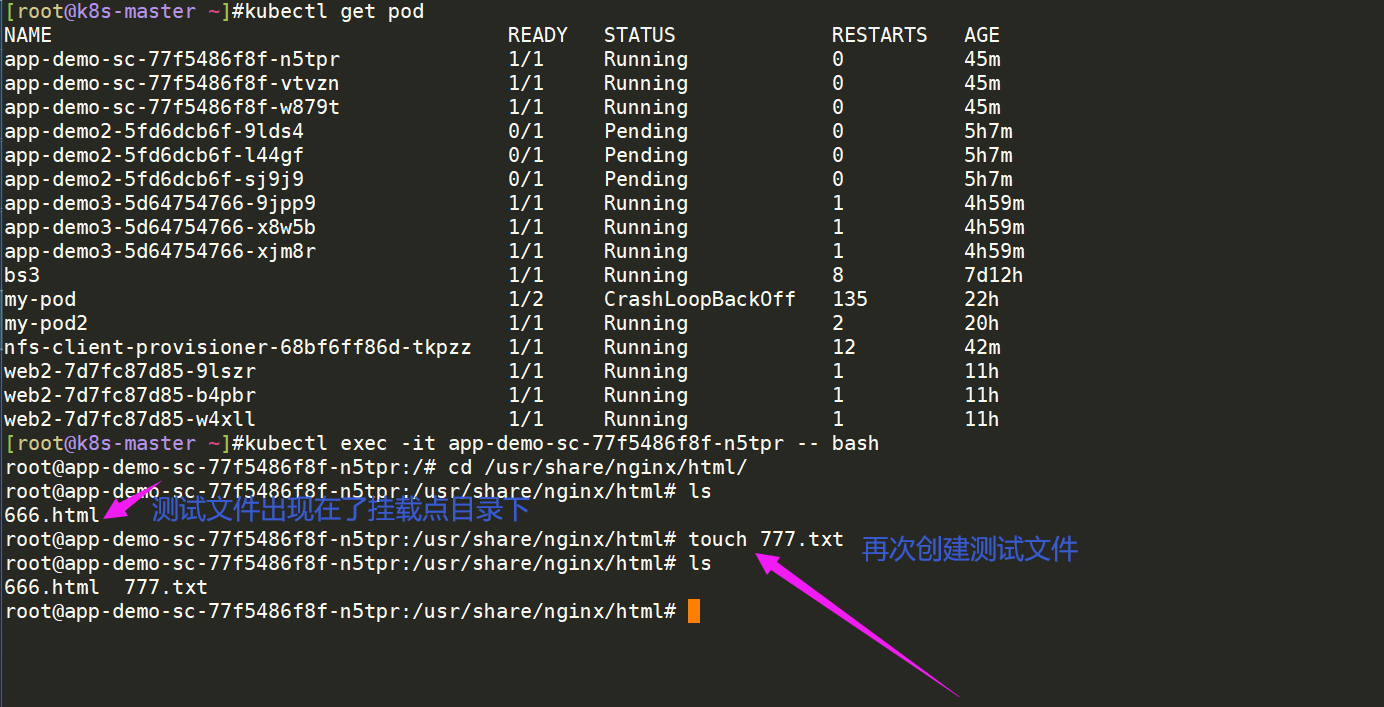

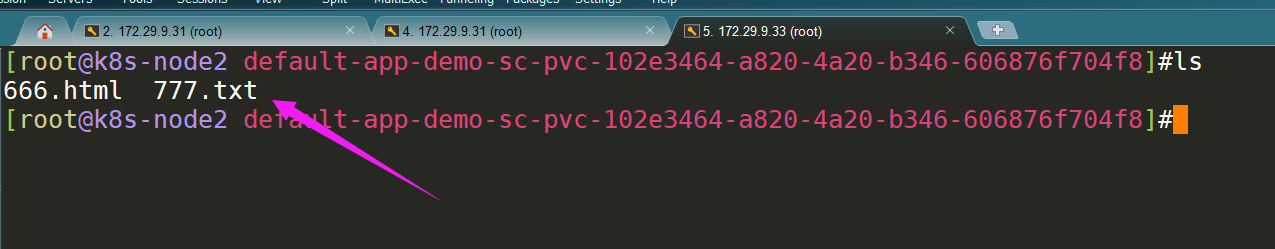

Enter a pod and check that the test file exists:

Then check the other pods in this group for the test file as well:
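Checking each replica by hand gets tedious with three pods. As a sketch, assuming the pods carry the `app=web` label from the manifest above and that the test file name is whatever was created on the NFS server (the name `test.txt` below is hypothetical), a loop over the pods can do the same check:

```shell
#!/bin/sh
# Sketch: report whether a file is visible inside every pod matching a label.
# Usage: check_file_in_pods <label-selector> <absolute-path-inside-pod>
check_file_in_pods() {
    selector="$1"
    file="$2"
    missing=0
    # -o name prints entries such as pod/app-demo-sc-xxxx, which exec accepts.
    for pod in $(kubectl get pod -l "$selector" -o name); do
        if kubectl exec "$pod" -- test -e "$file"; then
            echo "$pod: found"
        else
            echo "$pod: MISSING"
            missing=1
        fi
    done
    return "$missing"
}
```

For example: `check_file_in_pods app=web /usr/share/nginx/html/test.txt`. Note that any other Deployment using the same `app=web` label would be included in the loop too.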

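At this point, dynamic PV provisioning (through a StorageClass) gives us data sharing between the pods.

=> Every time you deploy a claim, the provisioner dynamically creates a volume for it, which is very convenient.

- Test again, this time requesting 50G: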

[root@k8s-master ~]#cp deployment-sc.yaml deployment-sc2.yaml

[root@k8s-master ~]#vim deployment-sc2.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: app-demo-sc2
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web
  strategy: {}
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - image: nginx
        name: nginx
        resources: {}
        volumeMounts:
        - name: data
          mountPath: /usr/share/nginx/html
      volumes:
      - name: data
        persistentVolumeClaim:
          claimName: app-demo-sc2
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-demo-sc2
spec:
  storageClassName: "managed-nfs-storage"
  accessModes:
  - ReadWriteMany
  volumeMode: Filesystem
  resources:
    requests:
      storage: 50Gi

Apply it and check:

[root@k8s-master ~]#kubectl apply -f deployment-sc2.yaml

deployment.apps/app-demo-sc2 created

persistentvolumeclaim/app-demo-sc2 created

[root@k8s-master ~]#kubectl get pod

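[root@k8s-master ~]#kubectl get pv,pvc # check whether the PV was created automatically and bound to the PVC

Check the export directory on the NFS server again: a directory has been created automatically here as well.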

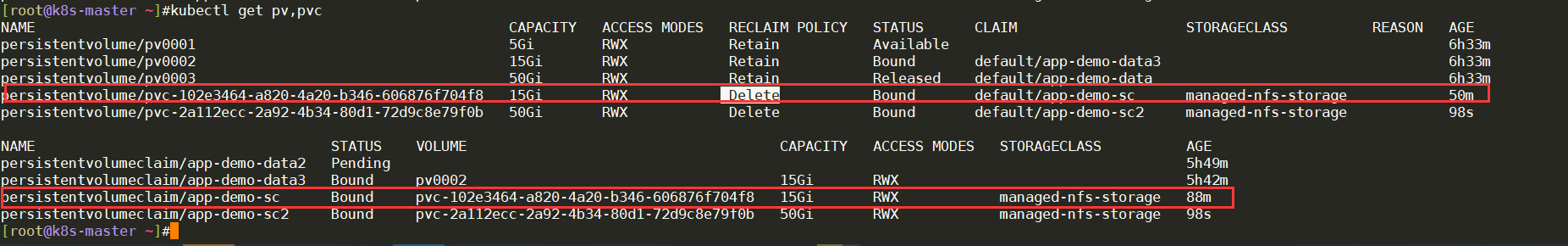

5.测试RECLAIM POLICY(回收策略)效果

- 现在,我把第一个deployment-sc.yaml给删除,看会产生什么效果?

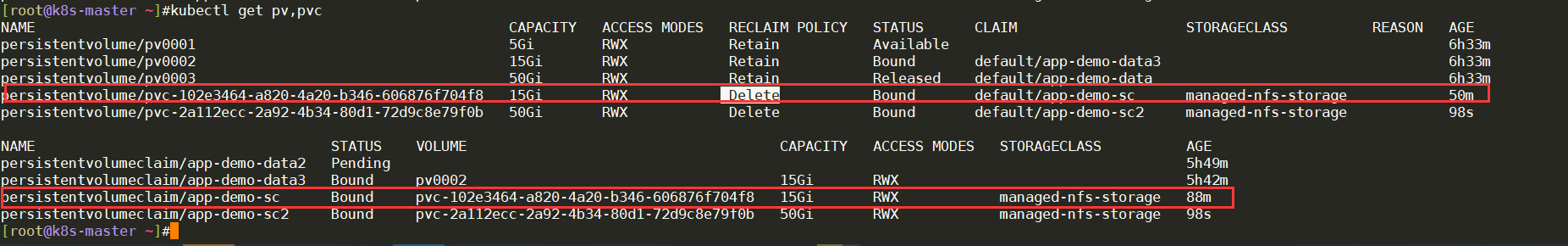

当前pv,pvc情况:

进行删除deployement-sc.yaml操作:

[root@k8s-master ~]#kubectl delete -f deployment-sc.yaml

deployment.apps "app-demo-sc" deleted

persistentvolumeclaim "app-demo-sc" deleted

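Check the pods: the app-demo-sc pods have been deleted.

Check the PV and PVC: the PV and PVC created earlier have both been deleted.

The backing storage has been deleted as well:

This is because the StorageClass for this NFS provisioner uses the default RECLAIM POLICY, Delete:

• Delete: the backing storage behind the PV is deleted together with the PV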
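The policy can be read back from the cluster instead of from a screenshot. A minimal sketch, assuming the StorageClass name from this lab (the helper name `show_reclaim_policy` is made up), that prints the class-level policy and then the policy each existing PV inherited:

```shell
#!/bin/sh
# Sketch: show the reclaim policy of a StorageClass and of all current PVs.
show_reclaim_policy() {
    sc="${1:-managed-nfs-storage}"
    # reclaimPolicy is a top-level field of a StorageClass object.
    kubectl get storageclass "$sc" -o jsonpath='{.reclaimPolicy}'
    echo
    # One row per PV: its name, its own policy, and the claim it is bound to.
    kubectl get pv -o custom-columns=NAME:.metadata.name,POLICY:.spec.persistentVolumeReclaimPolicy,CLAIM:.spec.claimRef.name
}
```

With the class.yaml used here, which does not set `reclaimPolicy`, the first line is expected to read `Delete`.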

Test complete.

6. Change the provisioner's archive-on-delete option and test it

[root@k8s-master ~]#cd nfs-external-provisioner/

[root@k8s-master nfs-external-provisioner]#ls

class.yaml deployment.yaml rbac.yaml

[root@k8s-master nfs-external-provisioner]#vim class.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name, must match deployment's env PROVISIONER_NAME
parameters:
  archiveOnDelete: "false" # whether to archive the data when the volume is deleted; as shipped in this file, it does not archive

# For this test, change it to "true" and save:

[root@k8s-master nfs-external-provisioner]#cat class.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: managed-nfs-storage
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner # or choose another name, must match deployment's env PROVISIONER_NAME
parameters:
  archiveOnDelete: "true"

[root@k8s-master nfs-external-provisioner]#

# The modified class.yaml cannot be applied directly, because the parameters of an existing StorageClass cannot be updated:

[root@k8s-master nfs-external-provisioner]#kubectl apply -f class.yaml

The StorageClass "managed-nfs-storage" is invalid: parameters: Forbidden: updates to parameters are forbidden.

[root@k8s-master nfs-external-provisioner]#

# Delete it first, then apply again:

[root@k8s-master nfs-external-provisioner]#kubectl delete -f class.yaml

storageclass.storage.k8s.io "managed-nfs-storage" deleted

[root@k8s-master nfs-external-provisioner]#kubectl apply -f class.yaml

storageclass.storage.k8s.io/managed-nfs-storage created
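The delete-then-apply pair can also be done in one step with `kubectl replace --force`, which deletes the object and re-creates it from the file. A small sketch under the same assumptions as the commands above (file name class.yaml, class name `managed-nfs-storage`; the helper name `recreate_storageclass` is invented here):

```shell
#!/bin/sh
# Sketch: re-create a StorageClass from its manifest and read back the
# archiveOnDelete parameter to confirm the new value took effect.
recreate_storageclass() {
    manifest="${1:-class.yaml}"
    sc="${2:-managed-nfs-storage}"
    # --force deletes the existing object, then creates it again from the file.
    kubectl replace --force -f "$manifest" || return 1
    kubectl get storageclass "$sc" -o jsonpath='{.parameters.archiveOnDelete}'
    echo
}
```

After the edit made in this section, the last line printed should be `true`.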

Now delete app-demo-sc2 and check whether the backing storage gets archived this time.

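Delete it:

[root@k8s-master ~]#kubectl delete -f deployment-sc2.yaml

Check the result after the deletion:

[root@k8s-master ~]#kubectl get pod

[root@k8s-master ~]#kubectl get pv,pvc # the pods, PV, and PVC have all been deleted

On the backing storage, however, the directory was archived instead of removed, which leaves you a way back. (To remove the data for good, delete it manually on the storage backend.)

Note: archived data cannot be attached to a volume again automatically; you have to handle it manually.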
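To see what was archived, the export directory can be listed on the NFS server. This is a sketch that assumes the provisioner renames a deleted volume's directory by adding an `archived-` prefix (as described in the upstream project's README; the exact names in this lab are only visible in the screenshots) and that the export directory is /ifs/kubernetes. The helper name `list_archived` is made up:

```shell
#!/bin/sh
# Sketch: list directories under the NFS export whose names start with
# "archived-". Run this on the NFS server, not on the Kubernetes master.
list_archived() {
    export_dir="${1:-/ifs/kubernetes}"
    for d in "$export_dir"/archived-*; do
        # When nothing matches, the glob stays literal and is skipped here.
        if [ -d "$d" ]; then
            basename "$d"
        fi
    done
    return 0
}
```

Each name printed is a candidate for manual cleanup or manual recovery.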

The results match expectations. That concludes the lab. 😘


Closing

That's it for this post. Thanks for reading; I wish you all a happy life and meaningful days. See you next time!